Overview

This article gives IT Professionals a basic procedure for measuring the IOPS load on a Windows application server. The information gathered from this measurement helps determine which Synology RackStation would best suit as replacement storage for that specific server.

Problem: IT Professionals need to determine the amount of IOPS

Over the past few weeks, many IT Professionals have asked me how to measure the IOPS of a Windows-based application server. They would like to move storage duties off the physical server and onto a Synology RackStation. I last visited this topic in IOPS – Performance Capacity Planning Explained. Unfortunately, from my research on the internet, there appears to be no advanced guide or set of principles for carrying out this task. What I’ve seen so far is either proprietary software sold with high-pressure sales tactics, manuals written by engineers that don’t explain how to apply the knowledge, or incoherent information fragmented across several articles.

Faced with insufficient information on measuring IOPS on a Windows server, I decided to create my own procedure and share it freely. IT Professionals can use it to determine the current IOPS consumption of their Windows application servers, or as a diagnostic tool to monitor the IOPS load on an existing storage array.

Procedure – Collecting data for IOPS measurement

- For complete background information on how to create a data collector set, please refer to Microsoft TechNet – Windows Performance Monitor – Create a Data Collector Set from Performance Monitor

- Run perfmon.exe

- Proceed to \Performance\Data Collector Sets\User Defined

- Right-click in the right-hand pane and select New, then Data Collector

- Name the new data collector, set the type to “Performance Counter Data Collector”, and click Next.

- Under Performance Counters, select the following counters and proceed to the next page of the wizard.

  - Counters

    - \Processor Information\% Processor Time

    - \Memory\Available MBytes

    - \LogicalDisk\

      - Avg. Disk Bytes/Read

      - Avg. Disk Bytes/Transfer

      - Avg. Disk Bytes/Write

      - Avg. Disk sec/Read

      - Avg. Disk sec/Transfer

      - Avg. Disk sec/Write

      - Current Disk Queue Length

      - Disk Bytes/sec

      - Disk Read Bytes/sec

      - Disk Reads/sec

      - Disk Transfers/sec

      - Disk Write Bytes/sec

      - Disk Writes/sec

  - Hardware to be collected

    - Processor Information

      - Select the _Total instance (total CPU)

    - Disk

      - Select the correct disk to determine IOPS Load

- Set Sample Interval to 1 second and proceed to the next page of the wizard

- Enable “Open properties for this data collector” and click Finish

- On the next window, set the Log format to Comma Separated Values

- Enable Maximum Samples and set it to 86500

  - This is a 24-hour recording period (86,400 one-second samples) with an extra 100 seconds as overhead. 24 hours is the minimum for this data collection, but for the best analysis I recommend capturing the IOPS load across an entire week. Some articles recommend an entire month, but I believe that is excessive given the number of samples being gathered. I do believe sampling every second is needed, as it gives the best “resolution” for this analysis.

  - If sticking with the bare minimum, pick the day when the application server sees the most traffic, such as a Monday when everyone is returning from the weekend.

- Click Apply

- The file will be saved under the default path of C:\PerfLogs\Admin\[CounterName]

- Close the counter properties and run the counter.
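
The Maximum Samples value above is simple arithmetic, so it can be recomputed for a longer recording period. The sketch below is only an illustration: the function name `max_samples` and its parameters are made up for this example, and the 100-sample overhead is carried over from the procedure above.

```python
def max_samples(days, interval_seconds=1, overhead_samples=100):
    """Return a Maximum Samples value for a recording of the given length.

    Hypothetical helper: the names and the default overhead are assumptions
    taken from the 24-hour example in this article.
    """
    seconds_per_day = 24 * 60 * 60  # 86,400 seconds in a day
    return int(days * seconds_per_day / interval_seconds) + overhead_samples


print(max_samples(1))  # 86500, the one-day value used in this article
print(max_samples(7))  # 604900, a full week at the same 1-second interval
```

A week at one-second resolution is roughly 600,000 rows per counter, which is worth keeping in mind before opening the resulting CSV in Excel.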

Description of Counters

- \Processor Information\% Processor Time

- References

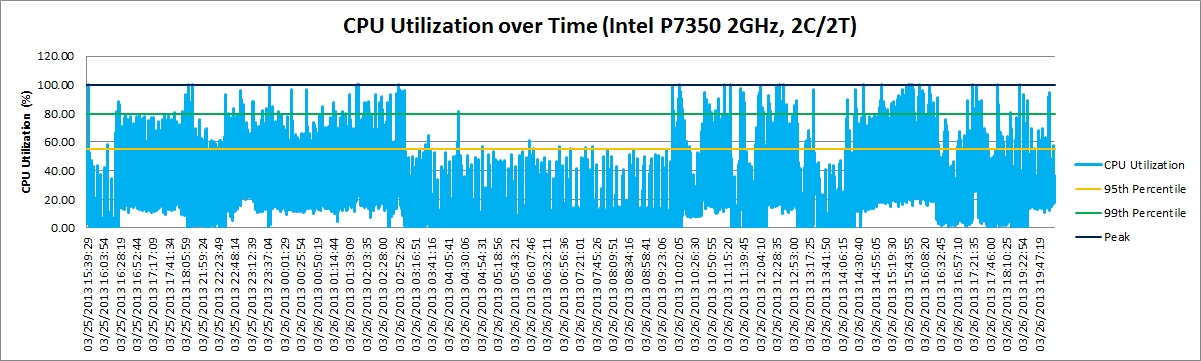

  - From my experience, if CPU utilization is over 80% for 95% of the time, either there is a resource bottleneck on the application server or a processor upgrade should be considered.

  - Assuming that the system is properly optimized, if the CPU is at 100% utilization for over 95% of the time, the IOPS data collected from the storage array cannot be used, because the CPU bottleneck is limiting what the true IOPS on the disk could be.

- \Memory\Available MBytes

  - Available system memory

  - In my experience, on a Windows system, available memory below 5% of total RAM is a cause for concern: it indicates insufficient RAM, and the IOPS data will be inaccurate. The system is making heavy use of virtual memory, which obscures the IOPS data collected from the HDD. An accurate assessment is not possible, because application IOPS and virtual memory IOPS are now mixed in the same data pool.

- \LogicalDisk\

  - References

  - Avg. Disk Bytes/Read: disk bytes per read IOP

  - Avg. Disk Bytes/Transfer: disk bytes per concurrent IOP (reads and writes combined)

  - Avg. Disk Bytes/Write: disk bytes per write IOP

  - Avg. Disk sec/Read: disk latency per read IOP

  - Avg. Disk sec/Transfer: disk latency per concurrent IOP (reads and writes combined)

  - Avg. Disk sec/Write: disk latency per write IOP

  - Current Disk Queue Length: a high queue length is an indication of a bottleneck on the computer system

  - Disk Bytes/sec: disk bytes per second (throughput)

  - Disk Read Bytes/sec: disk read bytes per second

  - Disk Reads/sec: read IOPS

  - Disk Transfers/sec: concurrent IOPS (reads and writes combined)

  - Disk Write Bytes/sec: disk write bytes per second

  - Disk Writes/sec: write IOPS
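
The CPU and memory rules of thumb above can be checked against the collected samples before any IOPS analysis is done. The following is a minimal sketch, assuming the samples have already been loaded into plain Python lists; the function names, the dictionary keys, and the `total_ram_mb` parameter are hypothetical, and the memory rule is only reported as a share of samples because the article does not say how often memory must be low to matter.

```python
def share_of_samples(values, predicate):
    """Fraction of samples for which predicate(value) is true (0.0 if empty)."""
    values = list(values)
    if not values:
        return 0.0
    return sum(1 for v in values if predicate(v)) / len(values)


def check_collection(cpu_percent, available_mb, total_ram_mb):
    """Apply the article's rules of thumb to the collected samples.

    cpu_percent:  samples of % Processor Time
    available_mb: samples of Available MBytes
    total_ram_mb: installed RAM in MB (not in the log, supplied by the reader)
    """
    cpu_busy = share_of_samples(cpu_percent, lambda v: v > 80.0)
    cpu_pegged = share_of_samples(cpu_percent, lambda v: v >= 100.0)
    low_memory = share_of_samples(
        available_mb, lambda v: v < 0.05 * total_ram_mb
    )
    return {
        # Over 80% utilized for 95% of the time: CPU bottleneck.
        "cpu_bottleneck": cpu_busy >= 0.95,
        # At 100% for over 95% of the time: IOPS data cannot be used.
        "iops_unusable_cpu": cpu_pegged > 0.95,
        # Share of samples with less than 5% of RAM available.
        "low_memory_share": low_memory,
    }
```

Perfmon may report a saturated CPU as slightly below 100, so the `100.0` threshold may need loosening on real data.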

Procedure – Processing the data

This next part of the process uses some more advanced Excel techniques to cut down on processing time, such as Auto Fill Down and using Ctrl with the arrow keys to jump to a specific column or row. The work in Excel prepares the data to be graphed. Most of the analysis is based on the 95th percentile, which represents the capacity needed from a storage array. Network bandwidth is a useful analogy: just because a laptop has a 1GbE port doesn’t mean it is using the full 1GbE 100% of the time. In my experience, general-purpose internet computing uses less than 10% of that capacity.

For this analysis, the charts need to be set up to show the 95th percentile, the 99th percentile, and the peak load. These metrics help determine what the storage array needs to deliver and the maximum stress observed on it. Knowing the peak load and how often it occurs helps with future capacity planning and shows whether there are any concerns with the application.
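
For readers who prefer to check these three figures outside Excel, here is a minimal sketch of the calculation. It assumes linear interpolation between the closest ranks as the percentile definition, which is one common choice and may differ slightly from other tools; the names `percentile` and `load_summary` are made up for this example.

```python
def percentile(values, p):
    """Return the p-th percentile (0-100) using linear interpolation."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("no samples to analyze")
    rank = (len(ordered) - 1) * p / 100.0  # fractional index into the sorted data
    lower = int(rank)
    upper = min(lower + 1, len(ordered) - 1)
    weight = rank - lower
    return ordered[lower] + (ordered[upper] - ordered[lower]) * weight


def load_summary(values):
    """Return the three figures charted in this article for one data series."""
    return {
        "p95": percentile(values, 95),
        "p99": percentile(values, 99),
        "peak": max(values),
    }


# Made-up series: mostly light load with a short burst, as during a backup.
iops = [20] * 97 + [4000] * 3
print(load_summary(iops))
```

With a series like this, the 95th percentile stays at the everyday load while the peak captures the burst, which is why the charts show both.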

To download an example of the final chart analysis, along with all of the raw data, please look at the measuring Windows IOPS sample file. This file is an example of data collected from my computer. Please note that the file is 42MB zipped and 140MB unzipped, so it may take a while to open.

The first thing I did with the raw data was refine it: I moved columns around so the data is easier to analyze, and I converted values to more appropriate units of measurement, such as milliseconds or kilobytes.
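
The same unit conversions can be sketched in a few lines of code. This is an illustration only: it assumes the log's column headers end with the counter names shown, that 1KB means 1024 bytes, and that blank cells should be skipped. The output names such as `read_latency_ms`, the host name `HOST`, and the sample row are all made up.

```python
import csv
import io

# Counter-name suffix -> (refined column name, multiplier). Assumed naming.
CONVERSIONS = {
    "Avg. Disk sec/Read": ("read_latency_ms", 1000.0),
    "Avg. Disk sec/Write": ("write_latency_ms", 1000.0),
    "Avg. Disk Bytes/Read": ("read_block_kb", 1 / 1024),
    "Avg. Disk Bytes/Write": ("write_block_kb", 1 / 1024),
    "Disk Read Bytes/sec": ("read_mb_per_sec", 1 / (1024 * 1024)),
    "Disk Write Bytes/sec": ("write_mb_per_sec", 1 / (1024 * 1024)),
}


def refine(lines):
    """Convert raw CSV rows into rows with friendlier names and units."""
    rows = []
    for raw in csv.DictReader(lines):
        refined = {}
        for column, value in raw.items():
            if column is None or value is None or not value.strip():
                continue  # skip malformed or blank cells
            for suffix, (name, factor) in CONVERSIONS.items():
                if column.endswith(suffix):
                    refined[name] = float(value) * factor
        rows.append(refined)
    return rows


# Made-up two-line log for demonstration.
header = [
    "Time",
    r"\\HOST\LogicalDisk(C:)\Avg. Disk sec/Read",
    r"\\HOST\LogicalDisk(C:)\Avg. Disk Bytes/Read",
]
sample = ",".join(f'"{h}"' for h in header) + "\n"
sample += '"01/01/2024 00:00:01","0.002","4096"\n'
print(refine(io.StringIO(sample)))
```

For a real log, the `io.StringIO(sample)` argument would be replaced by a file opened with `open(path, newline="")`.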

From the refined data file, the following graphs need to be generated. As in the example file, I recommend keeping the graphs on a separate worksheet and keeping everything to the same scale, which makes the data easier to analyze. I also suggest setting the graph colors manually and using copy and paste for most of the graphs so that they remain visually consistent. The percentile values I used are based on the worst case out of the three series (write, read, concurrent); I usually select the most extreme case as a conservative measure for the analysis.

Procedure – Analyzing the data

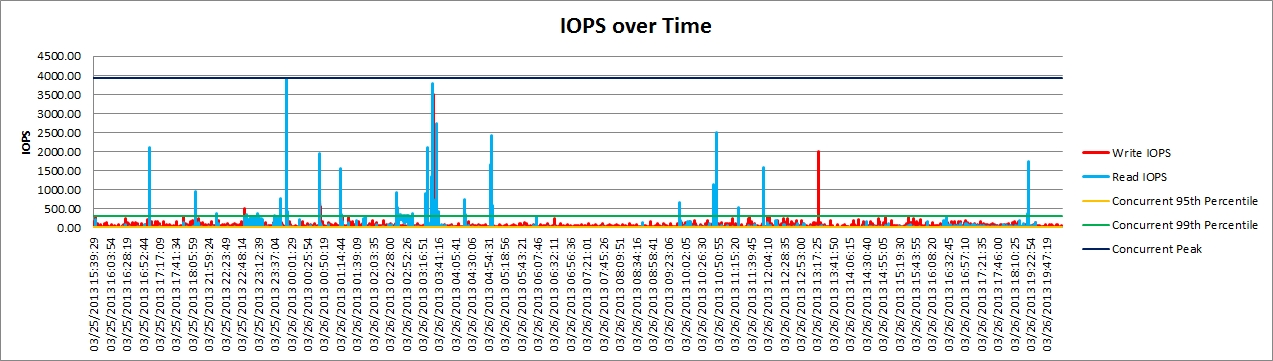

- IOPS over time

  - Composed of write and read IOPS over time, with percentile analysis based on the highest values out of read, write, or concurrent.

  - This graph shows the IOPS load experienced by the storage array and is used to determine what kind of replacement storage array is needed.

- Block Size over time

  - Composed of write and read IOP block size over time, converted to KB.

  - This graph shows the average IOP block size experienced by the storage array, which helps gauge what kind of storage array performance is needed.

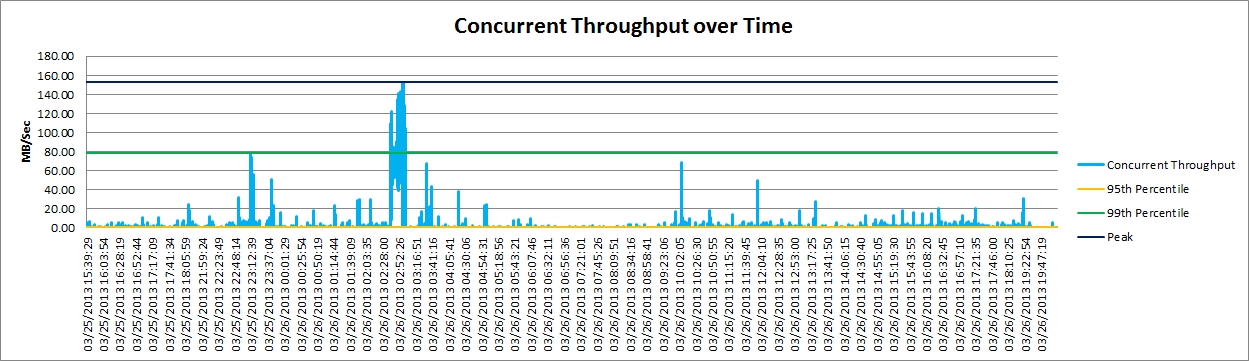

- Throughput over time

  - Composed of write and read bytes/sec over time, converted to MB/sec. This data determines the basic infrastructure needed to support the storage array.

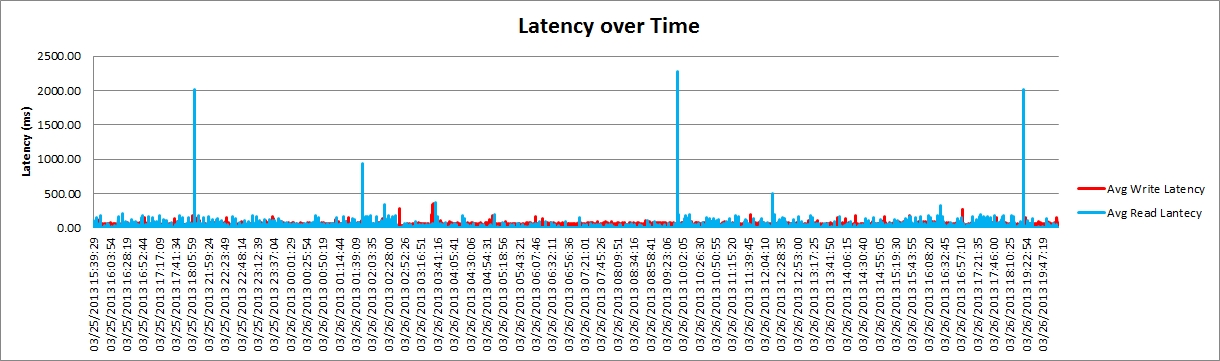

- Latency over time

  - Composed of write and read latency over time, converted to milliseconds.

  - This data can be used to identify any latency problems. In my example, I experienced a lot of latency, close to 2000ms, when CPU utilization was at 100%.

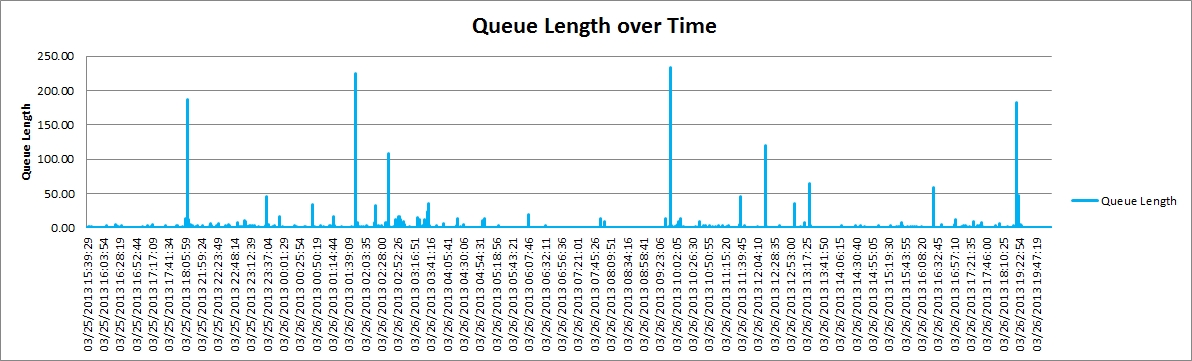

- Queue Length over time

  - Composed of Current Disk Queue Length.

  - This data, along with latency, shows how efficiently the storage array is processing requests. In my example, a queue length of 250 is an indication of a bottleneck, which in my case is the CPU at 100% utilization.

- CPU Utilization over time

  - This graph is used alongside all the other graphs, at the same scale, to relate processor utilization to the other aspects of the storage array.

- Memory Utilization over time

  - This graph is used alongside all the other graphs, at the same scale, to relate memory utilization to the other aspects of the storage array.

- Summary Analysis

  - My computer at the 95th percentile uses less than 30 IOPS, and the block size is typically less than 32KB. This data shows that if I needed to host my OS on a RackStation, a Synology XS Series is more than capable. The only time of concern is when the computer is running backups, when it spikes to 4000 IOPS and close to 160MB/sec of throughput. If I were faced with a performance limitation on the storage, I would have to accept that my backups will take a little longer to complete. But for everyday use, a Synology XS Series RackStation is more than capable.

Summary

Once the data is collected, IT Professionals can invest a couple of hours in analyzing it to determine the current IOPS consumption of their existing Windows application server. Whether the data covers one day or seven, the process is the same. The 95th percentile of this data determines the basic needs of the replacement storage. Personally, though, I would tack on another 20% of performance as a buffer.
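
As a worked example of that sizing rule, the sketch below takes the worst of the three 95th percentile figures (read, write, concurrent) and adds the 20% buffer. The function name is made up, and the input numbers are placeholders rather than measurements.

```python
def sizing_target(p95_read, p95_write, p95_combined, buffer=0.20):
    """Worst-case 95th percentile IOPS plus a safety buffer (20% by default)."""
    worst_case = max(p95_read, p95_write, p95_combined)
    return worst_case * (1 + buffer)


# Placeholder figures: a worst-case 95th percentile of 30 IOPS.
print(sizing_target(p95_read=18, p95_write=25, p95_combined=30))
```

The same function works for throughput or block size if those are the limiting factor; only the units change.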

IT Professionals can quickly determine what to look for in a storage array, whether that is a Synology RackStation loaded with mechanical drives or with solid state drives. This information can also help IT Professionals run diagnostics on the storage of an existing Windows server to look for performance problems.

Further Reading

Synology Blog – How to measure IOPS for VMware
